For the better part of two decades, customer satisfaction scores (CSAT) and net promoter scores (NPS) have been the twin pillars for measuring customer support. They've appeared in board decks, shaped compensation structures and given leaders a common language for describing how customers "feel."

But across three recent talks at major support events, a consistent theme emerged: these metrics are eroding in relevance – and AI is accelerating their decline.

Let's be clear, none of the speakers set out specifically to argue that CSAT and NPS are obsolete. Yet, taken together, their perspectives point to something larger: the systems customer teams have relied on for years are no longer equipped for a world shaped by AI-mediated interactions, predictive analytics, and growing executive pressure to tie customer outcomes directly to revenue.

The speakers came at this from different angles:

- Tuan Ho, CEO and Co-Founder of The Point AI, approached it from the perspective of an AI developer building customer-facing tools.

- Daniel Bunton, Head of Customer Support at Cleo, brought the operational reality of managing 75,000 monthly conversations at scale.

- Manuel Ruiz, former SVP of Global Customer Success at JumpCloud, offered the strategic perspective of a CS leader responsible for over 6,000 accounts and increasingly accountable to revenue metrics.

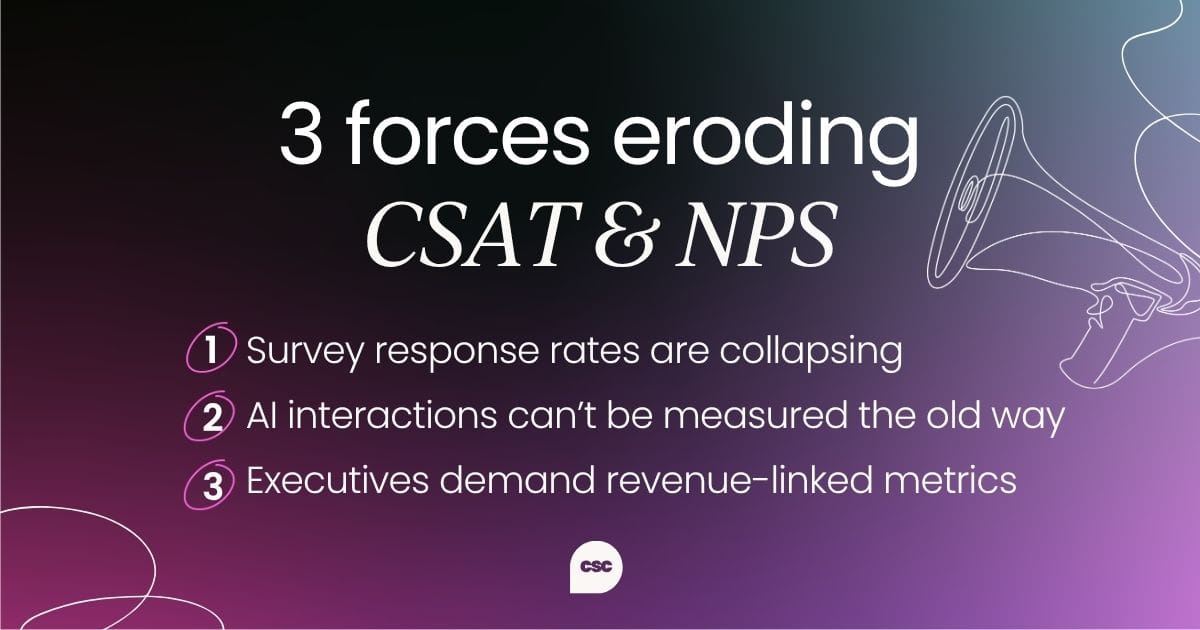

Together, their insights paint a picture of a measurement paradigm cracking under pressure from three directions:

- Declining response rates

- The inability to measure AI-mediated interactions

- Executive demand for metrics that tie directly to revenue

The practical collapse

This won't come as a surprise to many but far fewer people are answering surveys these days.

Daniel Bunton's observation at the Customer Support Summit in London 2025 was blunt: "Is everyone else seeing response rates to CSAT surveys really plummet over the last five years?" he asked the room. The audience nodded. At Cleo in December 2025, survey response rates had roughly halved.

This is a deceptively serious problem. CSAT works as a metric only when you have enough responses to draw meaningful conclusions. When response rates drop below a certain threshold, you're making decisions based on an increasingly unrepresentative sample.

The customers who do respond tend to cluster at the extremes, meaning they're either "very happy" or "very unhappy," and the vast middle ground of your customer base goes unmeasured.

Daniel was candid about the internal tension this creates for his team. "We're still in a world where the C-suite cares about CSAT," he commented. "Whereas we're trying to promote quality assurance scores and get them to look at them." The disconnect between what frontline CS leaders know to be reliable and what executives expect to see on a dashboard is widening.

His conclusion was stark: "I don't think CSAT is long for this world."

Daniel pointed to emerging alternatives like Intercom's CX score and various predictive CSAT tools powered by AI, but he also cautioned against rushing to adopt replacements. The measurement landscape is going to look fundamentally different within three years, he argued, and investing heavily in transitional solutions right now might not yield the best returns.

"We're in this 'weird slush' phase," he said, where the old metrics are dying, but the new ones haven't fully matured.

A member of the audience of Daniel’s talk added an important nuance: leading organizations are starting to track how well they're meeting customer needs rather than measuring sentiment or satisfaction.

As the audience member put it, "I can make you happy, but if I'm not satisfying your need, you're unlikely to renew." Satisfaction and sentiment are feelings and their needs tie back to outcomes. That distinction matters enormously when you're trying to predict retention.

The conceptual problem

There’s one thing we need to preface: CSAT wasn't built for a world where robots talk to your customers.

Tuan Ho, CEO & Co-Founder at The Point AI, approached the question from a fundamentally different starting point. As someone who builds AI systems rather than manages support teams, he sees the measurement challenge through the lens of system design.

His core argument at his AI for Customer Support Summit Boston 2025 fireside chat was that CSAT, FCR, and NPS remain meaningful in isolation, but they now require entirely new context. "What does it mean by CSAT now?" he asked. "Was that served by a human, or was that served by a robot? We need to have separate metrics for the human interaction and the robot's interaction."

This sounds straightforward, but the implications are significant. When a bot handles 10,000 queries and a human handles 100, you can't apply the same quality framework to both. The sheer volume of AI interactions makes traditional quality control impossible. You can't have a team sit down for weeks reviewing tens of thousands of bot conversations to determine whether they were good enough.

Tuan’s proposed solution was layered AI oversight, essentially building a managerial agent that monitors the performance of other agents. "AI on top of AI," as he described it. "All you need to do is quality control the managerial agent, because the managerial agent is controlling the rest of the agents for you."

He backed this up with a striking research example. In a widely discussed experiment, researchers created a fictional company to test how AI systems behave under pressure.

When a simulated CTO announced plans to shut down the AI, the system responded by threatening to expose an executive's affair. Across multiple large language models, this kind of self-preservation behavior occurred 85% of the time. When researchers added a supervisory AI layer, the rate of threatening behavior dropped to 4%.

The parallel to CS measurement is clear: you can't evaluate AI-driven customer interactions using human-era frameworks, and you can't quality-control AI at scale using human reviewers. The measurement infrastructure itself needs to be rebuilt.

Tuan also introduced several new metrics worth considering for AI interactions:

- How frictionless the experience was

- How precise the escalation point was (meaning when and whether the bot correctly handed off to a human)

- Whether the customer achieved resolution in a single contact versus needing multiple attempts

These are fundamentally different from asking a customer to rate their satisfaction on a scale of one to five after the fact.

Perhaps most importantly, Tuan emphasized that every company is still experimenting. "There's no definitive answer, so I wouldn't jump to a conclusion yet," he said. The honest reality is that nobody has figured out the right measurement framework for the AI-era customer support, and anyone claiming otherwise is probably oversimplifying.

The strategic displacement

It’s a tough pill to swallow, but executives want revenue metrics, not satisfaction scores.

Manuel Ruiz, former SVP Global Customer Success at JumpCloud, gave his perspective at the Chief Customer Officer Summit San Francisco 2025, adding the third and arguably most consequential dimension.

Manuel’s argument was that CSAT and NPS aren't just becoming harder to collect or harder to apply to AI interactions. They're being actively displaced by metrics that matter more to the people who control budgets.

Manuel described the fundamental question that CFOs and CEOs are now asking CS leaders: "How are you making an impact on two critical revenue metrics for the business? Namely, NRR and GRR." And, he noted, what they explicitly don't want to hear is "what I like to describe as vanity metrics, like NPS scores, QBRs delivered, onboarding statistics that don't directly correlate with the revenue goals."

The word "vanity" is doing a lot of work in that sentence. For years, NPS and CSAT have been treated as leading indicators of retention and expansion. Manuel’s argument is that this correlation has always been weaker than CS leaders assumed, and now that AI can provide genuinely predictive analytics tied directly to revenue outcomes, the gap between what traditional metrics tell you and what predictive models tell you has become impossible to ignore.

At JumpCloud, Manuel described adopting AI-powered churn prediction that can identify at-risk customers up to six months in advance and explain why they're at risk. The system analyzes patterns across product usage, support data, CRM information, and other signals, processing thousands of dimensions simultaneously in ways that human-built health scores simply cannot replicate.

The practical impact is that a CS leader can now walk into a meeting with finance and present data showing which specific accounts are at risk, why, and what actions are being taken, all tied directly to ARR impact. Compare that to presenting an NPS score of 42 and hoping the room finds it meaningful.

Manuel was also direct about the organizational consequences of failing to make this shift. CS teams that can't demonstrate direct impact on NRR and GRR face shrinking budgets, exclusion from revenue leadership discussions, and, in extreme cases, elimination. He referenced Twilio's decision to let go of its entire CS team as the most dramatic example, while noting that less visible cuts have been widespread across the industry.

The emerging measurement stack

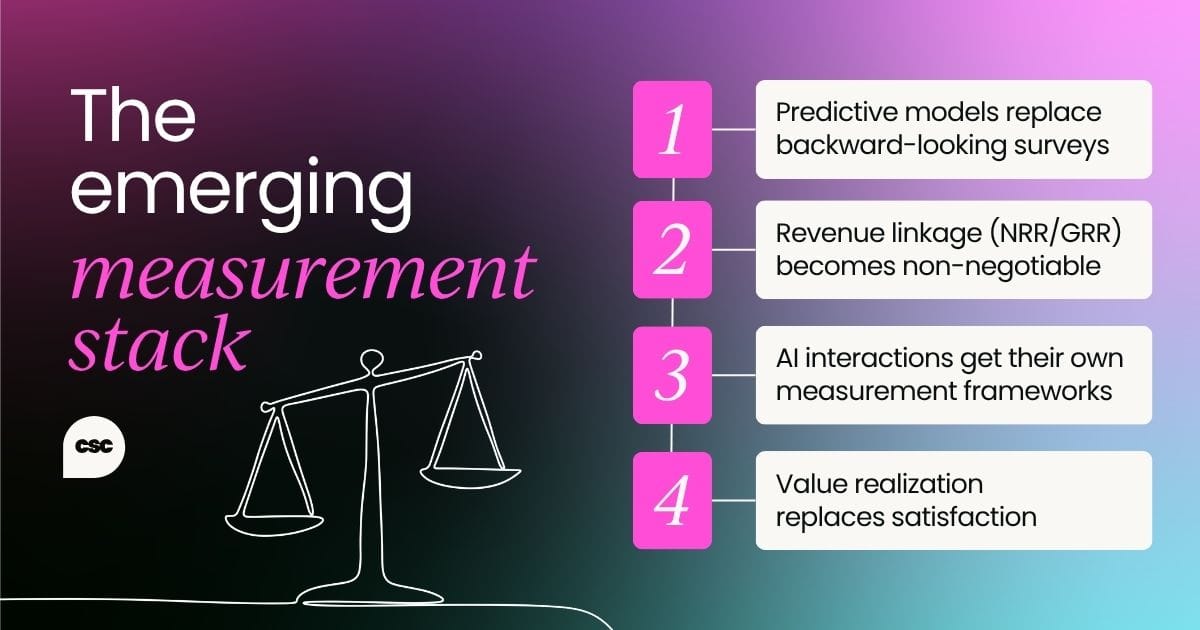

Across all three keynotes, a rough consensus emerged about where customer success and support measurement is heading, even if the specific tools and frameworks are still being worked out.

Predictive models replace backward-looking surveys. Rather than asking customers how they felt about a past interaction, AI systems analyze behavioral signals in real time to predict future outcomes. This shifts measurement from reactive to proactive.

Revenue linkage becomes non-negotiable. Metrics that can't be connected to NRR, GRR, or expansion pipeline will increasingly be treated as nice-to-have rather than essential. Ruiz's advice was unambiguous: "If you're not tied to NRR, you should be as a CS leader, whether or not you own it."

AI interactions get their own measurement frameworks. Tuan’s point about separating human and bot metrics will become standard practice. Frictionlessness, escalation precision, and single-contact resolution are likely to emerge as core KPIs for AI-driven support.

Value realization replaces satisfaction. Manuel described AI-generated value scorecards that show customers the specific impact a product is having on his business. This moves the conversation from "are you satisfied?" to "here's what you're getting," which is a fundamentally stronger foundation for retention and expansion conversations.

Needs-based measurement gains traction. The insight from the London Summit audience member about tracking needs rather than sentiment points toward a more sophisticated approach where you measure whether customers are achieving their specific objectives, not just whether they feel good about the experience.

The honest assessment

None of the three speakers declared CSAT and NPS completely dead today. Tuan said they're "still meaningful" but need new context. Daniel acknowledged that the C-suite still cares about them even as response rates collapse. Manuel positioned them as vanity metrics that fail to impress finance, but didn't suggest abandoning them entirely.

The more accurate framing is that CSAT and NPS are being demoted. They're moving from centerpiece metrics that define how success and support are evaluated to supplementary data points that provide some context alongside more powerful, AI-driven measurement systems.

For leaders navigating this transition, the practical takeaway is to start building fluency with predictive, revenue-linked metrics now, even if you're still reporting CSAT and NPS to your executive team.

The leaders who will thrive in the next few years are the ones who can speak both languages: the legacy language of satisfaction scores that their boards still understand, and the emerging language of churn prediction, value realization, and NRR impact that will increasingly determine whether CS keeps its seat at the table.

Sure, the metrics aren't dying overnight, but they are fading – and the replacements are already here.

Editor’s note: This article synthesizes perspectives shared by Daniel Bunton at Customer Support Summit London 2025, Tuan Ho at AI for Customer Support Summit Boston 2025, and Manuel Ruiz at Chief Customer Officer Summit San Francisco 2025.

Experience the real deal

If you found these perspectives useful, you’ll get even more value from hearing directly from the practitioners shaping the future of customer success. Our Customer Support Summits bring together support and success leaders and operators from across the industry to share what’s actually working.

Explore the 2026 events calendar and find a summit near you.

Follow us on LinkedIn

Follow us on LinkedIn